[updated 30 March, 2023 - see the end of the post]

The question of Copyright and AI scanners came thundering home recently when I learned that my Flickr images have been included in at least two major AI datasets. So, I submitted the following Help Request to Flickr.com -

Dear Sirs,

Using tools available to see if my images have been consumed by "AI" scanners I see that, yes, indeed, my Flickr images have been scanned.

I am very aware of Copyright and its limitations. I used to advise legal counsel at the companies I worked for in technology. I did NOT give anyone the right to scan and then use my works.

I hold copyrights to all but one of my images, and I believe there may be a serious issue by allowing "AI" scanners to do what they are doing.

What is Flickr's position on this?

Indeed, among many other tasks, I helped advise the lawyers at the last company I worked for. I'm fairly familiar with what is and is not allowed, and where the gray areas are in copyright law and its application. Perhaps Flickr had developed a position on the topic?

Their reply, from what I can tell, is likely a lawyer-reviewed, sanitized, canned message that gets sent to everyone who asks about AI -

"Hi there,

Thank you for your input on this matter.

As this is a new and emerging space, we have not yet fully reviewed how Flickr will fit with AI images and photography.

At this time, there have not been any changes to the Copyright Act to address AI generated images.

It is something we are reviewing closely and should there be any changes that would affect the whole platform, we will certainly notify our members.

As always, if there are instances where a creator identifies their copyright or license has taken place, they can submit a claim through our DMCA process for review and actions here.

Best,

Doug

We're updating the terms of Flickr's free accounts to strengthen our community and the long-term stability of Flickr. Read more here."

First, I fail to see where there is something unique about AI that could require legal redefinition/reapplication. Secondly, Flickr recently announced a new area of AI generated images where people can post the output of AI software.

It feels as if Flickr is giving this a rather big "pass."

Class Action lawsuits have been filed and I wondered how to join. One site I visited indicated that _normally_

"...you don't need to do anything to "join" a class action. If your legal rights are affected by a class action, you usually will only need to get involved once the case settles. In most cases, you will need to submit a claim, either online or through the mail, to receive your portion of the settlement or judgment..."

Digging into Flickr's past I found that four years ago it was known that Flickr had been scraped by an AI in a project that involved IBM.

"...“This is the dirty little secret of AI training sets. Researchers often just grab whatever images are available in the wild,” said NYU School of Law professor Jason Schultz..."

One more thing. There was an article published back in 2018 on scraping Flickr for "deep learning experiments", complete with code to implement a scraper, where it was noted that -

"...1. It is not legal and ethical to scrape some websites

2. Even if you were not concerned about the law or ethics, scrapping at scale can be challenging particularly if there are safeguards in place to discourage scrapping..."

Which begs the question: Did Flickr allow scrapers/scanners, or did they just not care and let it happen? After all, they'd published an API that people could use as they pleased.

Any way I look at it I'm wondering if the benefits that stills photographers in the "Pro" program _pay_ for outweigh the huge issue that AI poses, and has posed for several years, for creative copyrighted works. Flickr may on some level be culpable.

I follow a number of tech websites to try and keep current with the State of Things in science and technology. Something very concerning turned up just the other day (March 24, 2023 to be exact).

After listening to the podcast I came to realize that the problems posed by AI far outstrip the scanning of our images.

AI is out of control and, if not contained, might pose serious risks to us. As this fire is building to disturbingly large proportions, Microsoft has laid off key personnel from their AI Ethics team. Isn't _now_ the time to staff-up ethics teams on AI? What the h*ll is going on?

Ack! Something very important is happening right this moment and I've not been following the ethical and moral implications of AI as closely as I could have.

If you're at all interested in this topic, have a listen.

[Update 30 March, 2023] - Some people are calling for an outright ban on AI.

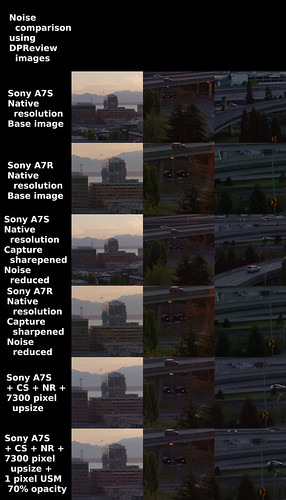

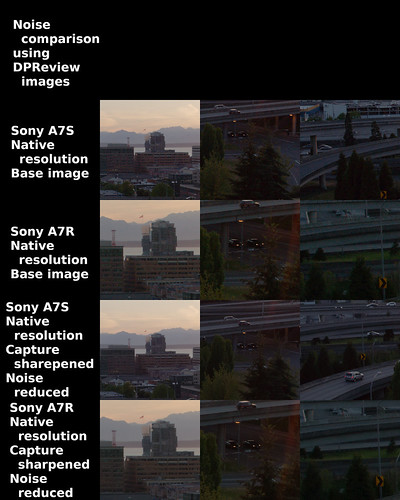

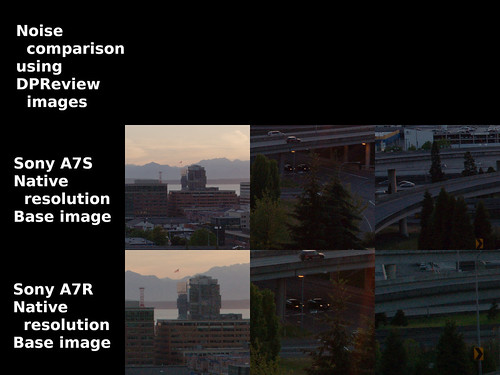

Yes, the images used to illustrate this blog entry were carefully chosen to set the mood.